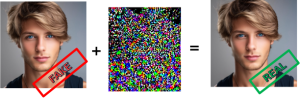

Artificial Intelligence (AI) is no longer just a technological revolution: it has become a powerful tool for influencing opinions and distorting reality. Anyone can download an app, tap a few buttons, and create a fake image or video of astonishing quality. Facial expressions can be recreated, identities replaced, and entire scenes convincingly fabricated. This opens the door to misinformation, political manipulation, personal defamation, and large-scale social harm. As manipulated media travels across social networks at unprecedented speed, society faces a fundamental question: can we still trust what we see?

The project “Robust AI solutions for dependable Media analysis” (REMEDY) is dedicated to building the next generation of robust, secure, and reliable forensic tools for authenticating digital media.

Professor Benedetta Tondi, Associate Professor in the Department of Information Engineering and Mathematical Sciences, tells us about it.

What is REMEDY about?

“Modern tools for authenticating media use Deep Learning, i.e., the technology at the basis of modern Artificial Intelligence, to detect tampering in images and videos. These AI-based detectors are powerful, but they face dangerous challenges: attacks can be designed to fool them. With simple techniques (carefully crafted perturbations or, in some cases, even just the application of standard processing), malicious users can modify digital media in ways that make forgeries appear real to state-of-the-art detectors.

Hence, the core problem is this: Deep Learning is incredibly capable, but also incredibly fragile.

A tiny ad-hoc perturbation, imperceptible to the human eye, can trick a detector into believing a fake image is real.

REMEDY aims to break this cycle by developing intrinsically robust forensic tools that can withstand both intentional attacks and unintentional degradations (compression, social-media processing, etc.).”
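
To make the fragility described above concrete, here is a minimal, purely illustrative sketch. It does not represent REMEDY's detectors or any real forensic tool: the "detector" is a hypothetical linear scorer with random weights, the "image" is a list of random pixel values in [0, 1], and the names `detector_score` and `perturb` are invented for this example. It applies a sign-based perturbation (in the spirit of the well-known fast gradient sign method) that changes every pixel by at most 1% of its range, yet moves the score from "fake" to "real".

```python
import random

def detector_score(weights, bias, pixels):
    """Toy linear detector (an assumption): score > 0 means 'fake', score <= 0 means 'real'."""
    return sum(w * p for w, p in zip(weights, pixels)) + bias

def sign(value):
    """Return -1, 0 or 1 according to the sign of value."""
    return (value > 0) - (value < 0)

def perturb(weights, pixels, epsilon):
    """Shift each pixel by at most epsilon in the direction that lowers the score.

    For a linear scorer the gradient with respect to the pixels is simply the
    weight vector, so stepping against sign(weights) is the most effective
    change under a per-pixel budget of epsilon. Values are clipped to [0, 1].
    """
    return [min(1.0, max(0.0, p - epsilon * sign(w)))
            for w, p in zip(weights, pixels)]

random.seed(0)
num_pixels = 10_000
weights = [random.uniform(-1.0, 1.0) for _ in range(num_pixels)]
pixels = [random.uniform(0.2, 0.8) for _ in range(num_pixels)]

# Choose the bias so that the unmodified image is clearly flagged as fake.
bias = 5.0 - sum(w * p for w, p in zip(weights, pixels))

epsilon = 0.01  # at most 1% of the pixel range per pixel
adversarial = perturb(weights, pixels, epsilon)

clean_score = detector_score(weights, bias, pixels)
adversarial_score = detector_score(weights, bias, adversarial)
max_change = max(abs(a - p) for a, p in zip(adversarial, pixels))

print("clean score:", clean_score)
print("adversarial score:", adversarial_score)
print("largest per-pixel change:", max_change)
```

Because the many tiny per-pixel changes all push in the same direction, their effect on the score adds up across thousands of pixels. Real deep detectors are nonlinear, but the same accumulation effect is one commonly cited reason why imperceptible perturbations can succeed.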

What challenges does REMEDY address?

“The project addresses three major research questions. First, it investigates which Deep Learning architectures are naturally harder to fool. Different types of Deep Learning models, e.g. Convolutional Neural Networks (CNNs) and Vision Transformers (ViT), analyze images in different ways. REMEDY compares these approaches to understand which ones hold up better when someone tries to trick them, and whether combining them can offer even stronger protection.

Furthermore, the research explores how detectors can focus on what matters in an image. Fake images usually alter only part of a scene: a face, an object, a small region. REMEDY aims to build detectors that pay attention to semantic aspects instead of relying on tiny, fragile clues. By grounding the analysis in content and semantics, detectors become much harder to deceive.

Finally, REMEDY addresses how to protect detectors when attackers strike back. Attackers are smart and actively look for ways to bypass the detectors meant to catch them. The project investigates strategies to enhance detector robustness by employing security-oriented training procedures and introducing controlled randomization mechanisms that obscure portions of the model’s internal structure from potential attackers. This makes it significantly harder for attackers to predict the detectors' behavior and fool them, while still keeping the detectors accurate and reliable.”

What impact could REMEDY have?

“REMEDY will provide more trustworthy digital media environments and forensic detectors capable of withstanding real-world adversaries. Furthermore, the project aims to deliver tools designed to survive the rapid evolution of AI-based attacks, as well as new guidelines for designing secure forensic systems. In an era where digital images influence politics, justice, and public perception, REMEDY contributes to a crucial societal mission: defending truth in a world where falsification has never been easier. To this end, REMEDY attempts not only to keep pace with advancing AI technologies, but also to build solutions that remain reliable even as the landscape continues to evolve.”

Who is participating in the REMEDY project?

“The REMEDY project, funded by PSR 2024, involves researchers from the Department of Information Engineering and Mathematical Sciences of the University of Siena belonging to the VIPP group (Visual Information Processing and Protection). The research interests of the VIPP group span the whole area of Multimedia Security, with a particular focus on the protection and authentication of visual information. The group's areas of expertise include AI applications to digital forensics, data hiding and watermarking, adversarial machine learning, and AI security.”